Rate Limits Are a Pricing Bug

How Orchid GenAI Fixes AI’s Economics

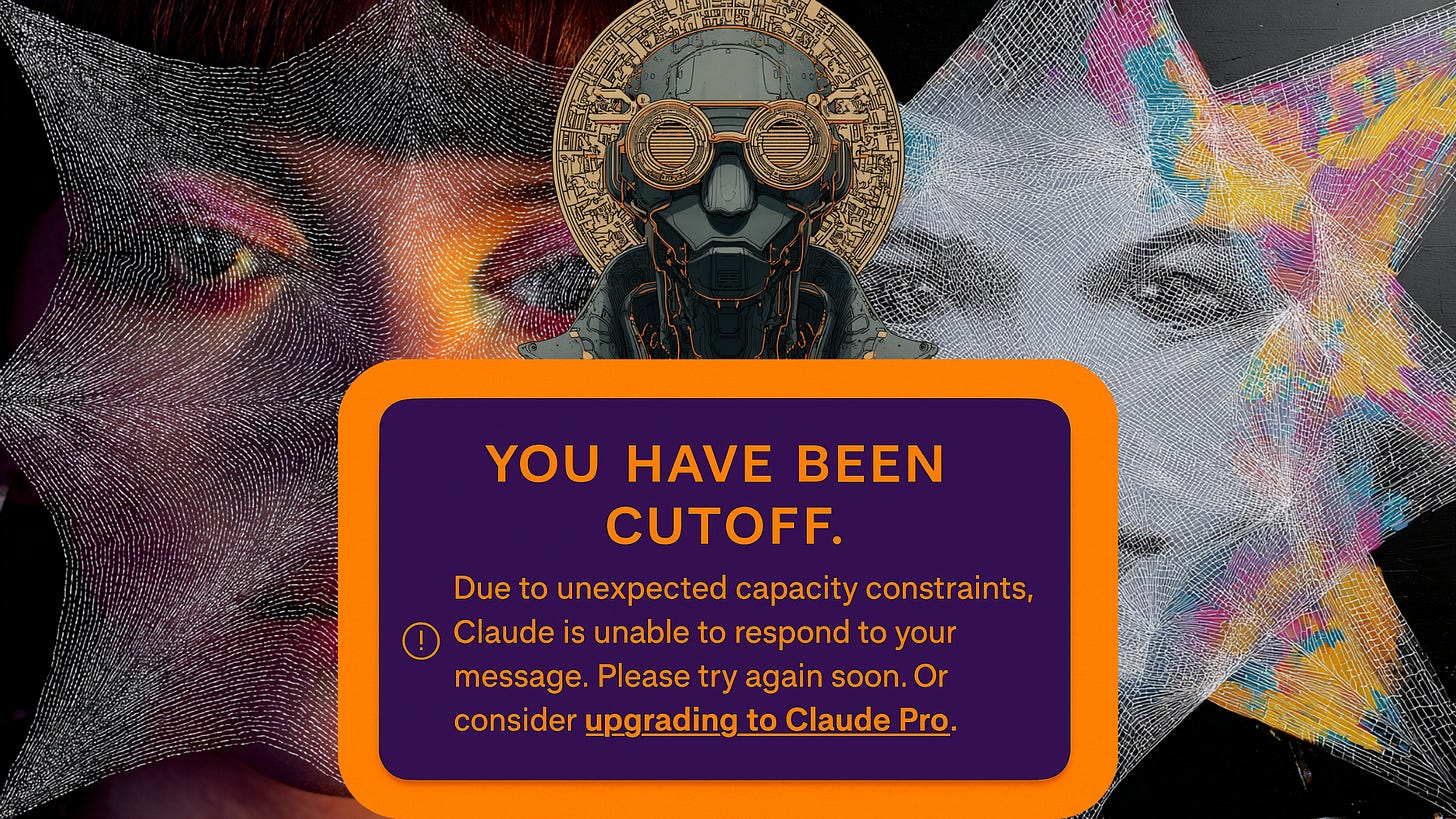

Vibe coding is simple: sit down, open your favorite AI model, and fall into a groove. Two hours later, mid-way through a problem, the session stops. “Due to unexpected capacity constraints, Claude is unable to respond to your message. Please try again soon.”

You quietly curse the hyperscalers as you endure the frustration of modern AI (despite its merits!). These limits are the predictable outcome of a pricing model misaligned with how people actually use these systems.

The good news: there’s another way. Orchid GenAI’s modular marketplace, powered by nanopayments, shows what happens when you align costs and value at the margin.

Why Rate Limits Exist

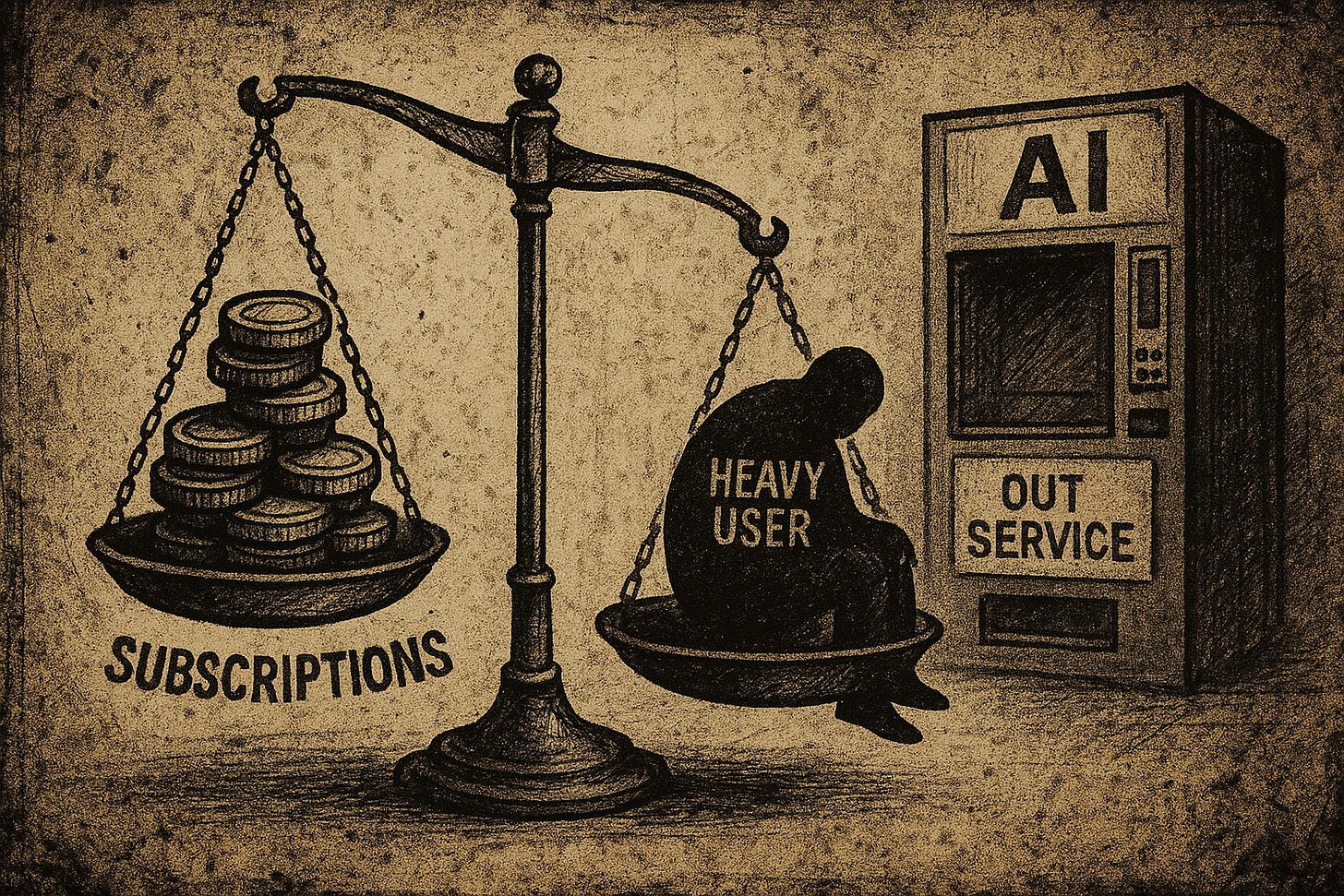

Rate limits are less about punishing users than protecting providers. Running inference on a large language model is not cheap. Every token has a real cost in compute cycles, electricity, and server resources, and capacity is finite. When pricing is structured as a flat monthly subscription, providers have to smooth those real costs over an “average” user base.

The problem is simple: heavy users break the math. A handful of power sessions can burn through more than the subscription fee covers. To protect margins, some providers cap usage. If demand spikes across many customers at once, caps also become a crude way to ration compute capacity.
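A toy calculation makes “heavy users break the math” concrete. It is a sketch under hypothetical numbers: the $20 monthly fee, the serving cost per 1,000 tokens, and the three usage profiles are illustrative assumptions, not any provider's real figures.

```python
# Toy subscription economics; every number here is a hypothetical illustration.
MONTHLY_FEE = 20.00          # flat subscription price, USD (assumed)
COST_PER_1K_TOKENS = 0.002   # provider's serving cost per 1,000 tokens, USD (assumed)

def monthly_margin(tokens_used: int) -> float:
    """Provider margin on one subscriber: the flat fee minus the cost of serving their tokens."""
    return MONTHLY_FEE - tokens_used / 1_000 * COST_PER_1K_TOKENS

# Hypothetical monthly token usage for three kinds of subscriber.
usage = {"light": 500_000, "typical": 3_000_000, "heavy": 40_000_000}

for profile, tokens in usage.items():
    print(f"{profile:>8}: margin ${monthly_margin(tokens):8.2f}")

# Usage level at which the cost of serving a subscriber equals their fee.
breakeven_tokens = MONTHLY_FEE / COST_PER_1K_TOKENS * 1_000
print(f"breakeven: {breakeven_tokens:,.0f} tokens/month")
```

Under these assumptions, any subscriber above the breakeven volume (10 million tokens a month here) costs more to serve than they pay, so the provider either raises the price for everyone or caps the outliers.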

Subscriptions were built for predictable consumption: streaming video, storage, or even some SaaS like productivity software. Generative AI, however, breaks the mold. Usage is spiky, unpredictable, and highly uneven (especially when it’s multi-modal). For some users, it’s a sidekick, whereas for others, it’s a full-time co-worker. Trying to fit that into a one-size-fits-all plan creates the experience we all know: pay, use, then get cut off.

The Misalignment of Subscriptions

Subscriptions create three types of misalignment.

1. Opaque economics. Users have no way to see how their activity maps to costs. Providers can only hope the average works out.

2. Incentives to ration. Providers lose money when heavy users consume more than planned. Caps, throttles, and hidden rules are standard defense mechanisms.

3. Bundled lock-in. Users are locked into one vendor (one plan, one API, etc.), even though the best model for one task may not be the best for the next. (GPT-5 started to change this, at least within OpenAI's own models, and we expect it to become standard practice.)

Together, these produce less experimentation, interrupted workflows, and frustration when productivity gets throttled. Worst of all, users know they are being throttled by unsustainable economics, not by software shortcomings.

A Market Design Problem

This is a market design problem. Subscription pricing was built for services with near-zero marginal costs, and Generative AI is not one of them. The only way to align incentives is to let costs flow directly with usage. (FWIW, Ed Zitron argues that subscriptions are not only unsustainable but doomed to fail.)

That means “pricing at the edge”: metering each request, compensating providers in real time, and letting users decide how much they’re willing to spend, without provider-imposed caps or forced averages.
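A minimal sketch of per-request metering, assuming a single flat token price. The `Meter` class and its fields are hypothetical illustrations, not Orchid's API; the point is that each request produces its own charge and credits the provider immediately, rather than drawing from a monthly pool.

```python
from dataclasses import dataclass, field
from decimal import Decimal

@dataclass
class Meter:
    """Hypothetical per-request meter: price each request as it happens."""
    price_per_1k_tokens: Decimal
    provider_balance: Decimal = Decimal(0)
    ledger: list = field(default_factory=list)  # (request_id, tokens, charge) tuples

    def record(self, request_id: str, tokens: int) -> Decimal:
        """Charge one request and credit the provider right away."""
        charge = Decimal(tokens) / 1000 * self.price_per_1k_tokens
        self.provider_balance += charge  # paid per request, not from a monthly average
        self.ledger.append((request_id, tokens, charge))
        return charge

meter = Meter(price_per_1k_tokens=Decimal("0.002"))
meter.record("req-1", 1_500)
meter.record("req-2", 250)
```

Because every entry in the ledger is priced on its own, a heavy session simply produces more entries; nothing has to be averaged across other users.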

Orchid GenAI’s Approach

Orchid GenAI is built with all of this in mind. Instead of subscriptions, it uses our probabilistic nanopayments: each request is priced and settled instantly, without blockchain congestion or monthly bills.
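The general idea behind probabilistic payments can be shown with a generic lottery-ticket sketch. This is not Orchid's ticket format or protocol; the function name, face value, and per-request cost are hypothetical. Instead of sending a tiny payment per request, the payer sends a ticket worth a larger face value that wins with probability (amount owed ÷ face value), so its expected value equals the amount owed and only winning tickets need to be settled.

```python
import random

def pay_with_ticket(amount_owed: float, face_value: float, rng: random.Random) -> float:
    """Settle a small debt with one lottery ticket.

    The ticket pays face_value with probability amount_owed / face_value and
    nothing otherwise, so its expected value equals amount_owed.
    """
    if not 0 < amount_owed <= face_value:
        raise ValueError("amount_owed must be in (0, face_value]")
    win_probability = amount_owed / face_value
    return face_value if rng.random() < win_probability else 0.0

rng = random.Random(7)           # seeded so the run is reproducible
cost_per_request = 0.0001        # hypothetical price of one request, USD
face_value = 1.00                # hypothetical ticket face value, USD
n_requests = 200_000

owed = cost_per_request * n_requests
paid = sum(pay_with_ticket(cost_per_request, face_value, rng) for _ in range(n_requests))
winners = int(paid / face_value)  # only these tickets would need settlement
print(f"owed ${owed:.2f}, paid ${paid:.2f} via {winners} winning tickets")
```

Over many requests the total paid tracks the total owed, yet only about 20 of the 200,000 tickets are expected to win, which is why a probabilistic scheme does not need one settlement per request.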

The architecture has two distinct channels:

Inference path: standard HTTP requests in the same format as OpenAI’s Chat Completions API, so tools like OpenWebUI work out of the box.
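Because the inference path follows the Chat Completions request shape, an OpenAI-compatible client should typically need only a different base URL. The sketch below builds such a request with Python's standard library; the endpoint, key, and model name are hypothetical placeholders, how Orchid authenticates inference requests is not specified here, and the request is constructed but not sent.

```python
import json
import urllib.request

BASE_URL = "https://proxy.example.com/v1"  # hypothetical Orchid-style proxy endpoint
API_KEY = "example-key"                     # hypothetical credential

def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a Chat Completions-style POST request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_KEY}",
        },
        method="POST",
    )

req = build_chat_request("example-model", "Explain this stack trace.")
# urllib.request.urlopen(req) would send it; skipped because the endpoint is a placeholder.
```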

Billing path: a WebSocket channel using nanopayments and short-lived bearer tokens. Importantly, this separates usage metering from inference, ensuring that billing is fast, private, and composable.
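One common way to build short-lived bearer tokens is an HMAC-signed payload that carries an expiry time. The sketch below assumes that design, with a hypothetical signing key and token format; Orchid's actual token scheme is not described here and may differ.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

SIGNING_KEY = b"hypothetical-billing-secret"  # held by the billing service only

def issue_token(session_id: str, ttl_seconds: float, now: Optional[float] = None) -> str:
    """Mint a bearer token that names a session and expires ttl_seconds from now."""
    now = time.time() if now is None else now
    payload = json.dumps({"sid": session_id, "exp": now + ttl_seconds}).encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).digest()
    return (base64.urlsafe_b64encode(payload).decode()
            + "." + base64.urlsafe_b64encode(signature).decode())

def verify_token(token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the session id if the token is authentic and unexpired, else None."""
    now = time.time() if now is None else now
    try:
        payload_b64, signature_b64 = token.split(".")
        payload = base64.urlsafe_b64decode(payload_b64)
        signature = base64.urlsafe_b64decode(signature_b64)
    except ValueError:  # wrong shape or bad base64 (binascii.Error subclasses ValueError)
        return None
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):  # constant-time comparison
        return None
    claims = json.loads(payload)
    return claims["sid"] if now < claims["exp"] else None
```

A leaked token of this kind is only useful until its expiry, which is the property the billing path relies on to keep abuse from spiraling.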

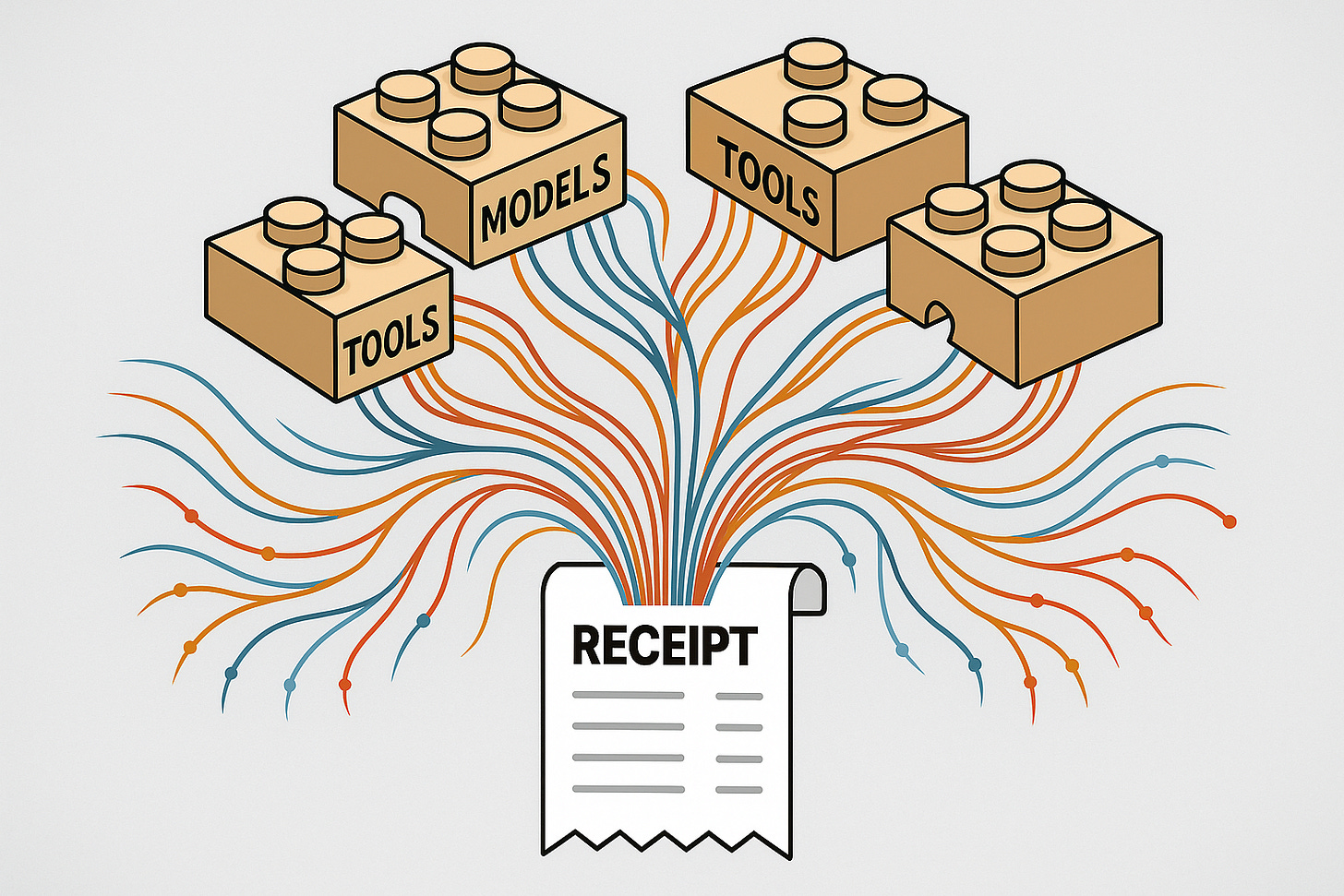

On top of this, Orchid adds a Tool Injection Framework aligned with Anthropic’s Model Context Protocol (MCP). At the proxy layer, users can inject capabilities like web search, calculators, or retrieval pipelines into any supported model (Claude, GPT, Mistral, DeepSeek, you name it) without changing the client. Tools can be toggled per session and per request.
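The proxy-side half of tool injection can be pictured as merging tool definitions into an incoming request. This sketch uses the `tools` array of the Chat Completions format; the registry, tool names, and `inject_tools` function are hypothetical, and it does not implement MCP itself, only the per-session and per-request toggling.

```python
from __future__ import annotations

import copy

# Hypothetical proxy-side registry, in the Chat Completions "tools" format.
TOOL_REGISTRY = {
    "web_search": {
        "type": "function",
        "function": {
            "name": "web_search",
            "description": "Search the web and return the top results.",
            "parameters": {
                "type": "object",
                "properties": {"query": {"type": "string"}},
                "required": ["query"],
            },
        },
    },
    "calculator": {
        "type": "function",
        "function": {
            "name": "calculator",
            "description": "Evaluate an arithmetic expression.",
            "parameters": {
                "type": "object",
                "properties": {"expression": {"type": "string"}},
                "required": ["expression"],
            },
        },
    },
}

def inject_tools(request: dict, session_tools: set[str],
                 request_overrides: dict[str, bool] | None = None) -> dict:
    """Return a copy of a Chat Completions request with the enabled tools added."""
    enabled = set(session_tools)                        # session-level defaults
    for name, on in (request_overrides or {}).items():  # per-request toggles win
        if on:
            enabled.add(name)
        else:
            enabled.discard(name)
    out = copy.deepcopy(request)  # never mutate the client's original request
    already = {t["function"]["name"] for t in out.get("tools", []) if t.get("type") == "function"}
    for name in sorted(enabled):
        if name in TOOL_REGISTRY and name not in already:
            out.setdefault("tools", []).append(copy.deepcopy(TOOL_REGISTRY[name]))
    return out
```

Since the merge happens at the proxy, the client keeps sending plain requests and the model behind the proxy can be swapped without touching the tool configuration.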

What this creates is a real marketplace. Models and tools operate independently, so users build their own stack, routing requests where they want, paying only for what they use. Providers are compensated directly, without relying on averages.

What Changes for Users

For users, the biggest shift is psychological: no more arbitrary stop signs. If you want to run 10 workflows in a row, you can. If you only need one quick query, you pay for that and nothing more.

The flexibility makes experimentation practical. You can try five models in five minutes, keep the one that works best, and drop the rest without worrying about exhausting a quota. Session-level spend limits give you control over budget, and usage is visible in real time.

Just as important: you don’t have to change how you work. Orchid GenAI plugs into familiar interfaces. Your client can still look like ChatGPT. The difference is that behind the scenes, it’s modular, vendor-neutral, and priced fairly.

What Changes for Providers

Providers benefit too. Subscriptions force them to guard against outliers. With nanopayments, every token consumed is compensated instantly. Instead of imposing a global throttle, they can let usage flow and rely on economics to balance the load.

It also creates room for specialization. A niche tool, say a domain-specific RAG pipeline, can earn revenue without building its own billing infrastructure, onboarding, or clients. The Orchid proxy handles all of that. Providers compete on latency, accuracy, and price, not just on who owns the customer relationship.
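Competing on latency, accuracy, and price suggests a simple routing rule: filter offers by the user's latency and quality requirements, then take the cheapest. The providers, prices, latencies, and scores below are made-up values, and the rule itself is an illustrative assumption rather than how Orchid routes requests.

```python
from __future__ import annotations

from dataclasses import dataclass

@dataclass(frozen=True)
class Offer:
    provider: str
    price_per_1k_tokens: float  # USD
    p50_latency_ms: int
    eval_score: float           # hypothetical quality score in [0, 1]

def choose_offer(offers: list[Offer], max_latency_ms: int, min_score: float) -> Offer | None:
    """Cheapest offer meeting the latency and quality bars; ties go to the faster one."""
    eligible = [
        o for o in offers
        if o.p50_latency_ms <= max_latency_ms and o.eval_score >= min_score
    ]
    return min(eligible, key=lambda o: (o.price_per_1k_tokens, o.p50_latency_ms), default=None)

offers = [
    Offer("provider-a", 0.0030, 400, 0.91),
    Offer("provider-b", 0.0020, 900, 0.88),
    Offer("provider-c", 0.0025, 350, 0.86),
]
pick = choose_offer(offers, max_latency_ms=500, min_score=0.85)
```

Tightening the quality bar or relaxing the latency bar changes which provider wins, so each provider has a reason to improve on whichever dimension users actually care about.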

This turns the ecosystem into a genuine marketplace. Users assemble stacks. Providers plug in. Both sides transact on transparent terms.

Quick Math Example

Suppose model usage costs $0.002 per 1,000 tokens and each tool call costs $0.001. A session that uses three models and two tools produces a clear, additive bill. If a project takes 10 such sessions, the cost is easy to compute: (tokens consumed ÷ 1,000) × token rate + tool calls × tool-call rate, summed across sessions.
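The same arithmetic in code, using the rates from the example ($0.002 per 1,000 tokens, $0.001 per tool call). The per-model token counts and per-tool call counts are hypothetical, and one token rate is applied to all three models for simplicity; `Decimal` keeps the currency math exact.

```python
from __future__ import annotations

from decimal import Decimal

TOKEN_RATE_PER_1K = Decimal("0.002")  # USD per 1,000 tokens, from the example
TOOL_CALL_RATE = Decimal("0.001")     # USD per tool call, from the example

def session_cost(tokens_by_model: dict[str, int], calls_by_tool: dict[str, int]) -> Decimal:
    """Additive bill: (tokens / 1,000) x token rate + tool calls x tool-call rate."""
    token_cost = sum((Decimal(t) / 1000 * TOKEN_RATE_PER_1K for t in tokens_by_model.values()), Decimal(0))
    tool_cost = sum((n * TOOL_CALL_RATE for n in calls_by_tool.values()), Decimal(0))
    return token_cost + tool_cost

session = session_cost(
    {"model-a": 12_000, "model-b": 8_000, "model-c": 5_000},  # hypothetical token counts
    {"web_search": 4, "calculator": 2},                        # hypothetical tool-call counts
)
project = 10 * session  # ten sessions like this one
print(f"session ${session}, project ${project}")
```

With these inputs, a session of 25,000 tokens and six tool calls costs $0.056, and ten such sessions come to $0.56.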

Heavy sessions cost more; light sessions cost less. Everyone sees the economics in real time, without mystery, cross-subsidies, or hidden limits.

Objections and Safeguards

Three natural questions come up.

“Won’t I spend too much?” Users can set session or daily caps. Burn is visible in real time, so you always know what you’re paying.
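User-side caps can be enforced before a request runs. A minimal sketch, assuming the client or proxy can estimate a request's cost in advance; `SpendGuard`, its methods, and the reset policy are hypothetical.

```python
from dataclasses import dataclass
from decimal import Decimal

class SpendLimitExceeded(Exception):
    """Raised when a request would push spend past a user-set cap."""

@dataclass
class SpendGuard:
    session_cap: Decimal
    daily_cap: Decimal
    session_spent: Decimal = Decimal(0)
    daily_spent: Decimal = Decimal(0)

    def authorize(self, estimated_cost: Decimal) -> None:
        """Reject a request before it runs if it would exceed either cap."""
        if self.session_spent + estimated_cost > self.session_cap:
            raise SpendLimitExceeded("session cap reached")
        if self.daily_spent + estimated_cost > self.daily_cap:
            raise SpendLimitExceeded("daily cap reached")

    def charge(self, actual_cost: Decimal) -> None:
        """Record what a completed request actually cost."""
        self.session_spent += actual_cost
        self.daily_spent += actual_cost

    def remaining_today(self) -> Decimal:
        """Real-time view of how much budget is left for the day."""
        return self.daily_cap - self.daily_spent

    def new_session(self) -> None:
        """Start a new session; the daily total keeps accumulating."""
        self.session_spent = Decimal(0)
```

The caps are chosen by the user, not the provider, so hitting one is a budgeting decision rather than an unexplained cutoff.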

“What about abuse?” Short-lived tokens, spend ceilings, and risk controls at the billing layer protect providers. Abuse doesn’t spiral because tokens expire quickly.

“Isn’t this complex?” Not really. Clients stay the same. You don’t install a new SDK or learn a proprietary schema. Orchid handles complexity at the proxy, not in your workflow.

The Bigger Picture

Rate limits are a symptom of mispriced usage. Subscriptions work for flat-cost services, not for Generative AI, where marginal costs matter.

Orchid GenAI fixes the economics by pricing requests at the edge. Nanopayments make real-time billing feasible. A modular marketplace gives users freedom, providers fair compensation, and the ecosystem room to specialize.

If you’ve ever been cut off mid-flow, you’ve felt the gap between how AI is sold and how it really works. Pay-per-moment pricing closes that gap. Stay in flow. Build the stack you want. And let the economics take care of themselves.